The founder's guide to validating product-market fit before writing code

Summary

In this article:

- Why most teams build the wrong thing, and how to stop doing it

- What product validation actually means in 2025

- How to test a product idea without a developer

- The real cost of skipping validation

- A practical product validation scoring framework

- How to use AI prototypes for product concept validation

- Tools that speed up the process

- Q&A with Mathias Davidsen, GM of Prototypes at Miro

TL;DR: Most teams validate too late, after months of building rather than before. The fix is to test your riskiest assumption early using visual prototypes, honest user conversations, and a simple scoring framework. AI tools like Miro Prototypes let you go from concept to clickable flow in minutes, without writing a line of code. This guide covers the full process, including a step-by-step validation sequence and a Q&A with Mathias Davidsen, GM of Prototypes at Miro, on how to get the most out of AI prototyping for validation.

Product validation is one of those topics that sounds obvious until you look at how rarely it actually happens. Most product teams know they should test their assumptions before committing to a build. Most of them do get around to testing, just much later in the process than they should, and usually only after something has already gone wrong.

The result is familiar: a product or feature that took months to ship, used by far fewer people than expected, solving a problem that turned out to be less urgent than it seemed. Not a catastrophic failure, necessarily, but a quietly expensive one.

This guide draws on insights from Miro's recent webinar, "From ideas to validated flows: AI prototyping for design and UX teams," hosted by Shipra Kayan, Principal Product Evangelist at Miro, and Mathias Davidsen, GM of Prototypes at Miro. It covers what product validation actually requires, how to do it faster using visual prototypes and AI tools, and what experienced product teams have learned about making it a consistent part of how they work.

Whether you are validating a brand-new product concept or testing a feature bet inside an existing product, the same principles apply.

Why teams skip validation, and pay for it later

There is a reason product validation gets treated as optional in the early stages. When you are moving fast and genuinely excited about an idea, stopping to test your assumptions can feel like slowing down. The assumption is that validation comes after you have something to show, not before.

But the cost of building the wrong feature compounds quickly.

A single misguided sprint costs two weeks of engineering time. A misguided quarter costs three months of runway, a hit to team morale, and everything else you could have built in that time. And that is before you factor in the rework: the refactoring, the revised specs, the extra coordination, and the information architecture debt that accumulates every time you ship something users did not actually need.

During the webinar, Kayan made a point that is easy to overlook: the problem is not just the features nobody uses. Once you have shipped five features across five different pages, you and your engineering team have to maintain that code indefinitely. The information architecture gets more tangled with every release. Low user adoption is the visible symptom. The underlying problem is structural.

Teams that keep building without validating also tend to lose confidence in their own judgment over time, and lose the trust of stakeholders who have watched too many launches underperform.

What product validation actually means

Product validation is not the same as user research, and it is not the same as building an MVP. It is the structured process of testing your core assumptions before committing to a solution.

At its most basic, product concept validation answers three questions:

- Is this a real problem? Do enough people experience it, and is it painful enough that they would change their behavior to solve it?

- Is this the right solution? Does your proposed approach address the problem in a way that makes sense to users?

- Will people actually use this? Is the solution intuitive and trustworthy enough to adopt?

You can run validation at any stage, but the earlier you do it, the cheaper it is. Testing an assumption on a sticky note costs almost nothing. Testing it in code costs a sprint.

The problem with vibe coding as a validation strategy

One of the most significant shifts in product development over the last two years is how cheap it has become to generate working prototypes. AI coding tools mean you can go from a prompt to a functional interface in an afternoon. That sounds like it should make validation easier. In practice, it introduces a new risk.

Kayan described this tension clearly during the webinar. The traditional product development process had natural friction built in: research findings, sticky note ideation, wireframes, and then progressively higher-fidelity artifacts. Each step required effort, and that effort gave teams a reason to pause and align before moving to the next stage. When coding was expensive, you thought harder before committing to a direction.

Now that you can generate a polished-looking interface in hours, that friction is gone. And the absence of friction is not always a good thing.

Kayan described a pattern she hears from designers consistently: vibe coding often starts with the solution rather than the problem. The tool produces something that looks finished and impressive, so people treat it as finished and impressive, even when the thinking behind it is still shallow. The prototype looks high-fidelity, but it lacks depth, because all that went into it was a brief prompt.

"Designers don't miss it," Kayan said during the webinar. "But other people looking at these prototypes sometimes will miss that it's lacking depth of thinking."

This matters for product validation because the goal of a prototype at the early stage is to generate honest feedback, not to impress people. A prototype that looks too finished often discourages the kind of critical response that makes validation useful.

How to test a product idea without a developer

Some of the highest-signal validation happens before a single line of code is written. Here is a practical sequence for idea validation that works whether you have a technical co-founder or not.

1. Write down your riskiest assumption

Before you build or test anything, get specific about what you are actually assuming. Not "people want better project management tools," but something more specific: "Product managers at companies with 50 to 200 employees will pay for a tool that surfaces blockers in their team's workflow before the weekly standup."

That is an assumption you can test. The generic version is not.

2. Talk to people who have the problem before you mention your solution

The most common validation mistake is leading with the solution. "I'm building X, what do you think?" is not a validation question. It is a pitch.

Instead, ask people to describe the problem in their own words. Ask how they are currently handling it. Ask how much time or money it costs them. If they are not already trying to solve it with something, that is a signal worth taking seriously.

3. Show a visual, not a description

There is a meaningful difference between describing a product concept and showing it. People respond more honestly, more specifically, and with more useful feedback when they can see something rather than imagine it.

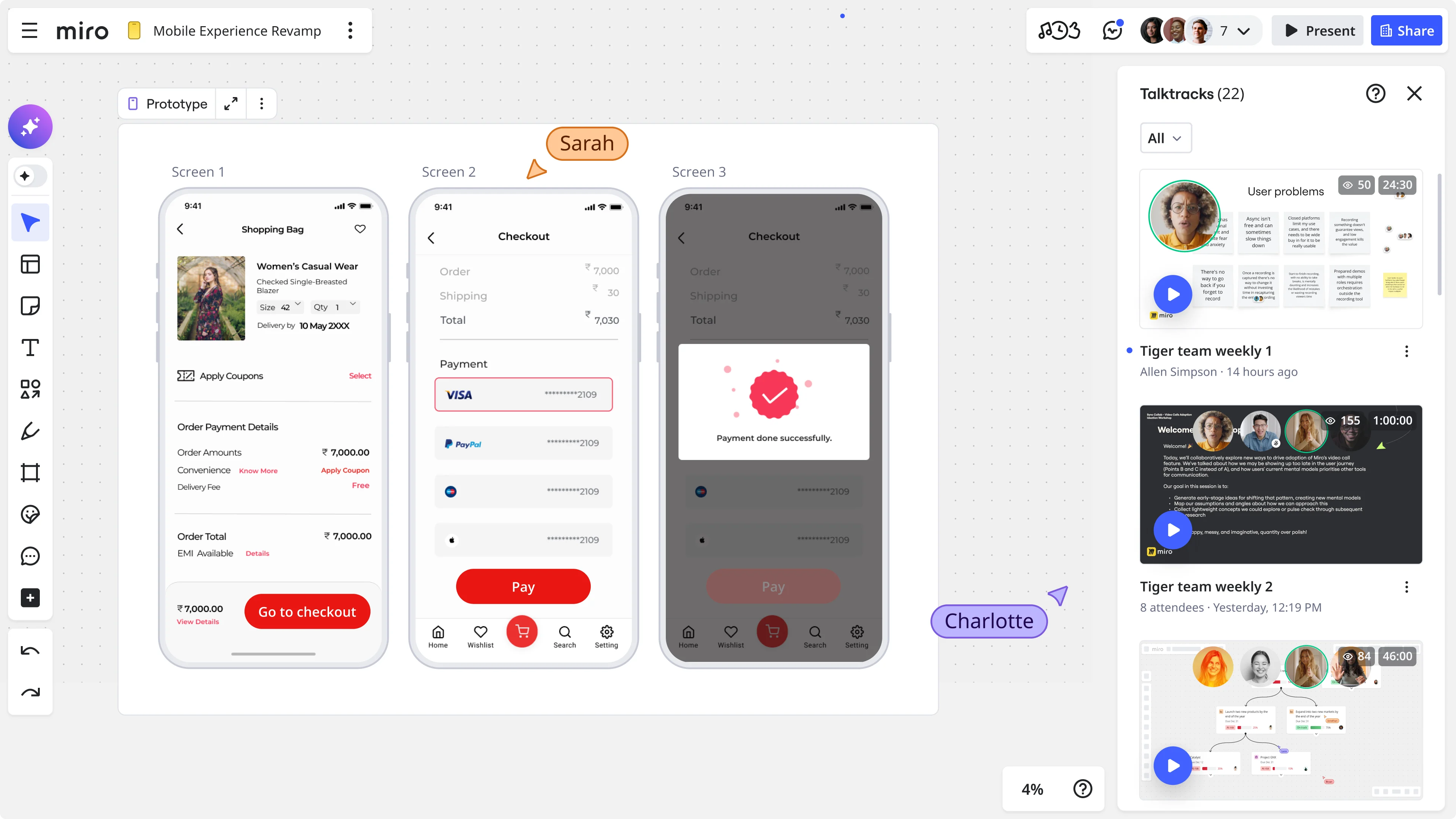

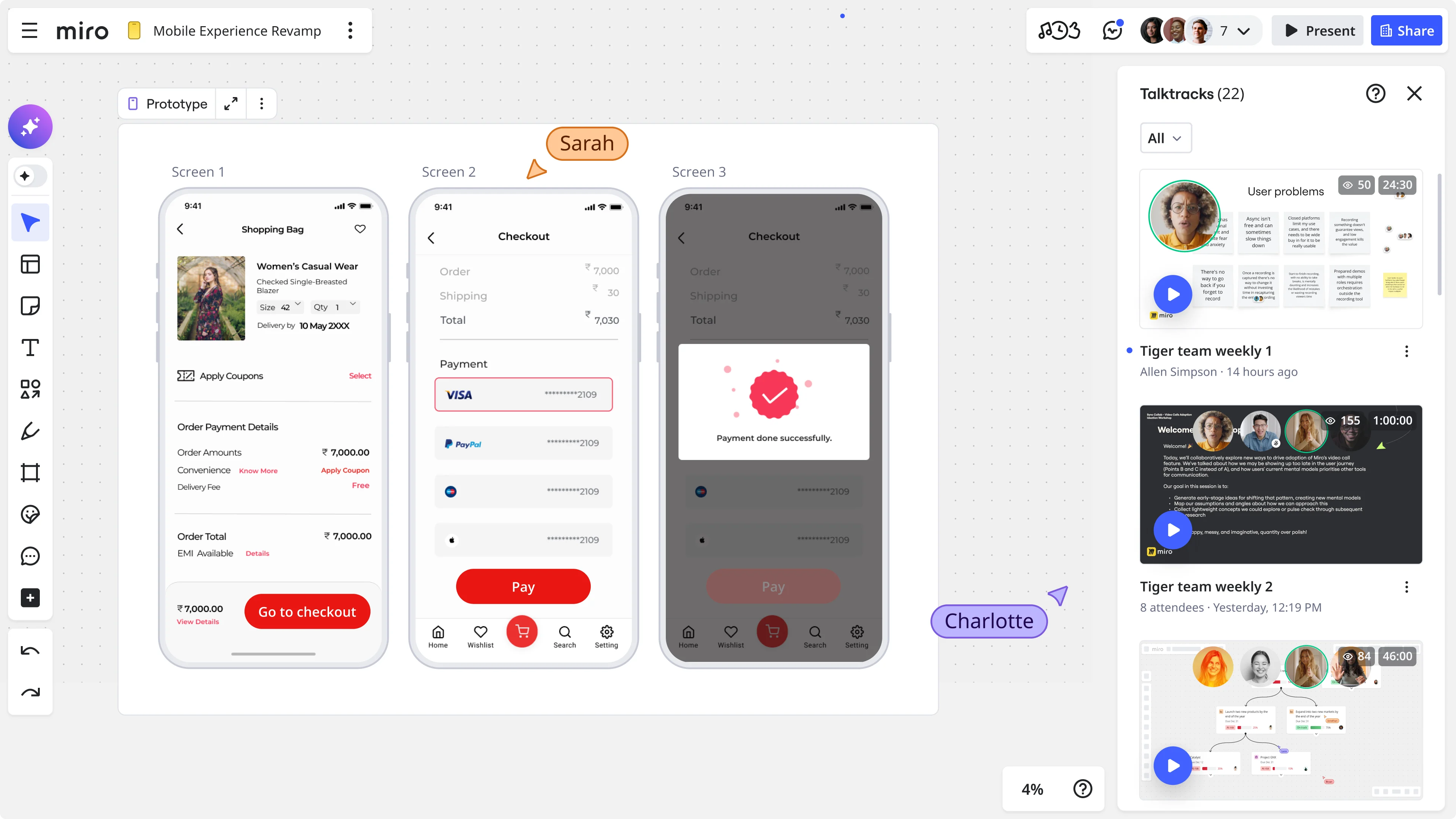

This is where lo-fi visual prototypes earn their keep. A clickable flow built in Miro, a rough screen sketch, a diagram showing how the user journey would work: all of these give people something concrete to react to without requiring any development work.

Björn Ehrlinspiel, Product Owner at Miles & More (part of Lufthansa Group), described this dynamic after his team used Miro Prototypes to validate a product direction: "I'm way more confident that the things we are implementing for the product are the right things. And I'm way more confident to bring that also in front of management. Miro Prototypes helps me show my vision to the management team of the product."

His team created, validated, and aligned on a solution direction in less than one day.

4. Test with people who can say no

The people who give you the most useful validation are the ones who have nothing to lose by telling you it is wrong. That means potential customers, not people in your network who want to be supportive. The goal is not confirmation. The goal is the fastest possible path to truth.

Product validation testing: what to actually measure

Once you have something to show, you need a way to evaluate what you are learning. Rather than collecting vague impressions ("they seemed interested"), you want to test specific hypotheses and track whether your prototype is passing or failing against them.

A basic product validation testing approach covers four dimensions:

| Dimension | Core question | What you're testing | When it matters most |
| --- | --- | --- | --- |
| Desirability | Do people actually want this? | Whether users are excited, indifferent, or opposed. This is the "is this a real problem" test. | Earliest stages, before anything is built |
| Usability | Can people figure out how to use it? | Where users get confused, slow down, or stop. This is the "does our solution make sense" test. | Once a prototype exists and can be clicked through |
| Viability | Would this fit into how they already work? | Whether users would replace an existing tool, add this to their stack, and pay for it. | When the concept is defined enough to discuss pricing and workflow fit |
| Feasibility | Can you actually build this? | Whether technical constraints allow the solution as designed. Especially relevant inside established companies. | Before committing engineering resources to a direction |

You do not need to test all four dimensions at once. In the earliest stages, desirability matters most. Usability and viability become more important as the concept gets more defined.
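If you want to track hypotheses as passing or failing rather than collecting impressions, a small record per hypothesis is enough. The sketch below is one possible way to do it in Python, not something prescribed by the webinar: the `Hypothesis` class, the `pass_threshold` field, and the idea of counting the share of sessions that confirmed a hypothesis are all illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """One testable statement tied to a validation dimension (hypothetical structure)."""
    dimension: str         # "desirability", "usability", "viability", or "feasibility"
    statement: str         # the specific claim you are testing
    pass_threshold: float  # assumed: share of sessions that must confirm the claim
    outcomes: list[bool] = field(default_factory=list)

    def record(self, confirmed: bool) -> None:
        """Log whether one session confirmed the hypothesis."""
        self.outcomes.append(confirmed)

    def status(self) -> str:
        """Return 'untested', 'passing', or 'failing' based on recorded sessions."""
        if not self.outcomes:
            return "untested"
        share = sum(self.outcomes) / len(self.outcomes)
        return "passing" if share >= self.pass_threshold else "failing"


# Example with made-up data: three sessions against one desirability hypothesis.
h = Hypothesis(
    dimension="desirability",
    statement="PMs already use a workaround to spot blockers before standup",
    pass_threshold=0.6,
)
for confirmed in (True, True, False):
    h.record(confirmed)
print(h.dimension, h.status())
```

The threshold is a judgment call you set before the sessions, so that you cannot move the goalposts after hearing the feedback.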

A product validation scoring framework

One practical approach is to score each prototype or concept version against a set of criteria before deciding whether to continue, pivot, or stop. Here is a lightweight product validation scoring framework you can adapt.

Score each criterion from 1 to 5 and add the five scores together, for a total out of 25. A total below 15 is a signal to pivot or stop. A total above 20 suggests you are ready to move to a higher-fidelity version.

Criterion 1: Problem recognition (1 to 5)

When you describe the problem, do users recognize it immediately? Do they use the same language you do, or do they look confused? Score higher if the recognition is instant and emotional.

Criterion 2: Current workaround (1 to 5)

Are people already trying to solve this problem with something else? An active workaround, even a messy spreadsheet, signals that the pain is real. No workaround at all often means the problem is not urgent enough.

Criterion 3: Prototype comprehension (1 to 5)

After seeing your prototype, can users accurately describe what it does? If most users can explain the concept back to you in their own words, score high. If they are confused or invent a different use case, score low.

Criterion 4: Behavioral intent (1 to 5)

Do users describe a specific scenario where they would use this? Vague enthusiasm ("this sounds cool") scores low. Specific intent ("I'd use this every time I prep for a sprint review") scores high.

Criterion 5: Objection clarity (1 to 5)

Can users articulate what would stop them from adopting this? Clear, specific objections are actually a good sign because they give you something to solve. Vague positivity with no objections usually means people are not taking the question seriously.
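The five criteria and the two thresholds can be turned into a small scoring helper. This is a minimal Python sketch, not an official tool: the `SessionScore` class and `decide` function are hypothetical names, and the "keep iterating" outcome for totals from 15 to 20 is an assumption, since the framework only says what to do below 15 and above 20.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SessionScore:
    """Scores (1 to 5) for one user session, one field per criterion."""
    problem_recognition: int
    current_workaround: int
    prototype_comprehension: int
    behavioral_intent: int
    objection_clarity: int

    def __post_init__(self) -> None:
        # Reject anything outside the 1 to 5 range used by the framework.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 1 <= value <= 5:
                raise ValueError(f"{f.name} must be between 1 and 5, got {value}")

    def total(self) -> int:
        """Sum of the five criterion scores (minimum 5, maximum 25)."""
        return sum(getattr(self, f.name) for f in fields(self))


def decide(total: int) -> str:
    """Map a total score to a next step using the thresholds from the framework."""
    if total < 15:
        return "pivot or stop"
    if total > 20:
        return "move to higher fidelity"
    # Assumption: the framework does not define the 15 to 20 band,
    # so this sketch treats it as "not conclusive yet".
    return "keep iterating"


# Example with made-up scores from a single session.
session = SessionScore(
    problem_recognition=4,
    current_workaround=5,
    prototype_comprehension=4,
    behavioral_intent=3,
    objection_clarity=5,
)
print(session.total(), decide(session.total()))
```

Keeping the per-criterion scores, rather than only the total, makes it easier to see later which part of the concept is letting the score down.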

How AI prototypes change the validation loop

The argument for AI-assisted prototyping in validation is not about speed for its own sake. It is about getting a higher-quality signal earlier in the process.

When your concept is a sticky note or a written description, stakeholders react to the idea. When it is a clickable prototype, they react to the experience. That is a fundamentally different kind of feedback, and far more useful for making decisions.

Kayan walked through this in the webinar. A team running a design sprint can take their sprint output, including pain points, key insights, solution concepts, and storyboards, feed it directly into Miro, and generate a clickable flow in minutes. That prototype can be shared with a URL, tested in Maze or UserTesting, and iterated on without switching tools or contexts.

"Instead of getting feedback on wireframes, you can get feedback on something higher fidelity," Kayan explained, "so customers feel like they're actually using it. Used to be that you had to wait a week to get a user research prototype spun up. Now you can do it really fast."

Davidsen added an important framing: Miro Prototypes is designed specifically for the early discovery and definition phase, not for production-ready design. The goal is fast, throwaway artifacts that help teams make decisions and align stakeholders. The output is intentionally medium fidelity so that feedback focuses on whether the concept works, not whether the interface looks polished.

Want to see the full workflow in action? Watch the on-demand webinar: From ideas to validated flows: AI prototyping for design and UX teams

The alignment problem that tools alone cannot solve

There is one part of product validation that technology will not automate, and the webinar addressed it directly.

Getting a cross-functional team, including product, engineering, design, marketing, and leadership, to agree on a direction is still fundamentally a human challenge. Kayan shared a quote from Amol Mhatre, Head of Growth at Anthropic, that she said resonated with the designers and PMs she works with consistently: "The one part of product and design work that AI can't automate is getting six people in a room to agree."

This is precisely where visual prototypes do their most important work, beyond user research. They give stakeholders something concrete to react to, rather than asking them to imagine a solution from a written spec. When a team runs a design sprint in Miro, generates a prototype from the sprint output, and shares it in the same session, the nature of the conversation changes. Instead of debating abstract requirements, the team is responding to a real thing they can see and interact with.

The prototype becomes the meeting, not the prep work for the meeting.

What good product validation looks like step by step

Here is a practical sequence that applies whether you are a solo founder or a PM at a larger company.

Step 1: Define your riskiest assumption

Every product idea sits on a stack of assumptions. Your job is to find the one that, if wrong, makes the whole concept pointless. Write it in a single specific sentence. If you find yourself writing something vague like "people want a better way to collaborate," push further. The riskiest assumption is usually about who the user is, what behavior they need to change, or whether the problem is painful enough that they would actually do something about it. If you cannot write it in one sentence, your thinking is not ready yet.

Step 2: Run three to five problem interviews

Talk to people who have the problem before you mention your solution. The goal is not to validate your idea. It is to understand whether the problem is real, how people currently deal with it, and how much it costs them. Ask open questions and listen closely to the words people use. Pay particular attention to workarounds: if someone is already solving this with a spreadsheet or a recurring meeting, that is a strong signal the pain is genuine.

Step 3: Build a visual prototype

Once you believe the problem is real, show your solution rather than describe it. Use Miro Prototypes to build a clickable flow from your board content, whether that is user stories, rough sketches, or design sprint output. Keep the scope tight and the fidelity medium. A prototype that shows the core interaction is more useful right now than one that covers every edge case. Focus on the part of the journey that tests your riskiest assumption.

Step 4: Run a sidekick review

Before you show it to real users, run it past a custom AI Sidekick in Miro configured to act as a critical evaluator. Give it a specific persona that reflects your target user, and ask a focused question, something like "would someone in this role trust this enough to use it for a real workflow?" Upload a persona description or a framework like Nielsen's 10 heuristics to sharpen the output. Address what it surfaces before your first live session, so users spend their time reacting to stronger thinking.

Step 5: Show it to five people who can say no

Showing your prototype to supportive colleagues is a morale exercise, not a validation exercise. The most useful feedback comes from potential customers who have nothing to gain from being kind about it. Aim for five sessions and score each one using the product validation scoring framework covered earlier. Five honest conversations will surface the patterns that matter far more reliably than ten enthusiastic responses from people in your network.

Step 6: Synthesize and decide

Drop your session notes and key quotes into Miro AI workflows to surface recurring themes, objections, and points of confusion quickly. Then look at your scores and make a call: continue if the signal is strong, pivot if users are consistently confused or solving a different problem, stop if the pain turns out not to be urgent enough to change behavior. The hardest part is being honest about what you heard.
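When you look at your scores across sessions, it helps to see both the overall picture and which criterion is weakest. The Python sketch below is one way to summarize them, under stated assumptions: the `summarize` function and the criterion keys are hypothetical names, averaging across sessions is a choice made here rather than something the framework specifies, and the sample scores are made up.

```python
from statistics import mean

# Criterion names from the scoring framework, used as dictionary keys (assumed naming).
CRITERIA = (
    "problem_recognition",
    "current_workaround",
    "prototype_comprehension",
    "behavioral_intent",
    "objection_clarity",
)


def summarize(sessions: list[dict[str, int]]) -> dict:
    """Summarize scored sessions: per-session totals, mean total, weakest criterion."""
    if not sessions:
        raise ValueError("need at least one scored session")
    totals = [sum(s[c] for c in CRITERIA) for s in sessions]
    per_criterion = {c: mean(s[c] for s in sessions) for c in CRITERIA}
    # The criterion with the lowest average is where the concept is weakest.
    weakest = min(per_criterion, key=per_criterion.get)
    return {
        "totals": totals,
        "mean_total": mean(totals),
        "per_criterion": per_criterion,
        "weakest_criterion": weakest,
    }


# Example with made-up scores from three sessions.
sample_sessions = [
    dict(zip(CRITERIA, (5, 4, 3, 4, 4))),
    dict(zip(CRITERIA, (5, 5, 2, 4, 5))),
    dict(zip(CRITERIA, (4, 4, 3, 4, 4))),
]
summary = summarize(sample_sessions)
print(summary["totals"], summary["mean_total"], summary["weakest_criterion"])
```

The summary deliberately stops short of making the call for you. A low average on one criterion, such as prototype comprehension, is a prompt to reread the session notes, not a verdict.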

Step 7: Share and align

Before you write a spec or start a sprint, share your findings with the people who need to act on them. Use Miro Talktracks to record a walkthrough of your board, so stakeholders can watch in their own time, see the prototype in context, and leave comments without another meeting on the calendar. Arrive at your first sprint with a shared understanding of what you are building and why, grounded in what real users actually said.

Done well, this process takes days, not weeks. And it saves the months you would otherwise spend building the wrong thing.

Q&A with Mathias Davidsen, GM of Prototypes at Miro

Mathias Davidsen leads the Miro Prototypes team, working closely with design and product teams on how AI prototyping fits into real workflows. He joined Miro when it acquired Uizard, one of the earliest AI prototyping tools. Below, he shares his perspective on three questions that come up consistently when founders and product leads start using prototypes for validation.

When vibe coding for alignment, what should founders pay attention to?

The mistake founders make is treating the vibe-coded thing as the output instead of the start of a conversation. Show it rough and early, before you've talked yourself into thinking it's good. The moment you start polishing is usually the moment you stop listening.

Vibe coding also removes the friction that used to catch bad ideas. We call this the velocity trap. When building took two weeks, you thought harder before committing. Now you can ship a wrong idea in an afternoon.

So reintroduce the filter yourself: show it to someone who has the problem you're solving and will actually tell you it's wrong. Not your co-founder. Not your friends.

When setting up AI Sidekicks to evaluate a prototype, what do teams need to consider to make sure they're getting valid feedback?

The biggest mistake is configuring a sidekick that's too generic. "Give feedback on this prototype" gets you polite, structured, totally useless output.

What works is being specific about two things: who the sidekick is simulating, and what question you're trying to answer. Give it a real persona, not "a B2B user" but "a head of product at a 40-person SaaS company who's been burned by tools that don't integrate with their stack." Then ask a specific question: "Would this person trust this enough to run a real session with it?"

You can take this further by uploading actual knowledge to the sidekick: a document describing your target persona in detail, or a framework like Nielsen's 10 UX heuristics. That way the feedback is grounded in real evaluation criteria. This makes all the difference.

And finally: don't configure it to validate your existing belief. Configure it to poke holes.

Beyond Miro, what other tools do you recommend for validating product concepts faster?

Miro is where I would do the visual alignment work: getting flows visible, collecting reactions, running structured feedback with stakeholders. But there are a few other things I'd always have running alongside it.

For synthesis (making sense of interviews, NPS comments, and support tickets), Miro AI workflows or Claude save a lot of time. You can drop in 20 customer quotes and surface the patterns in minutes, faster than doing it in a spreadsheet, and with Miro AI workflows it stays on the same canvas as your prototype work.

For async feedback, Miro Talktracks are underrated. You record a walkthrough directly on the board, stakeholders watch and comment in context, and you get reactions without scheduling five calls. This is especially useful when you're validating with people who are hard to get live.

If you want actual usability data, not "do people like it" but "can people use it," Maze is worth looking at. It runs quick task-based tests with a prototype link and gives you quantitative data on where people get stuck.

And once you've validated the concept and aligned on a direction, hand it off to Lovable, Claude, v0, or Figma to make it real.

Start validating before you build

The teams that consistently ship the right things are not the ones with the biggest budgets or the fastest developers. They are the ones who have learned to test their assumptions cheaply, align their stakeholders early, and treat every prototype as a question rather than an answer.

Product validation does not need to slow you down. Done well, it is actually what lets you move faster, because you are not wasting weeks building something you will have to undo. You are spending a few days making sure what you build is worth building.

The tools are there. Miro Prototypes lets you go from a design sprint or a rough concept to a shareable, clickable flow in minutes, without writing a line of code. You can gather honest feedback, stress-test your assumptions with AI Sidekicks, and bring stakeholders into the conversation before anything is set in stone.

The process is there too. Define your riskiest assumption, show it early, score what you hear, and make a call. Repeat until you are confident.

What is missing, in most cases, is the habit of doing it consistently. That is the part only you can build.

Ready to see how your next idea holds up? Try Miro Prototypes and go from concept to validated flow faster than you thought possible.

FAQ

What is product concept validation?

Product concept validation is the process of testing whether a product idea solves a real problem for real users before committing to building it. It typically involves problem interviews, prototype testing, and structured feedback sessions that help teams identify whether their assumptions are correct.

How do you validate a product idea without a developer?

You can validate a product idea without any development work by using visual prototypes, conversation-based testing, and AI tools. Tools like Miro Prototypes let you build clickable, interactive flows from written concepts or design sprint outputs with no code required. The goal is to test your core assumptions with real users before investing in engineering.

What is product validation testing?

Product validation testing is the practice of running structured experiments to evaluate whether a product concept meets user needs. This includes usability testing (can users figure out how to use it?), desirability testing (do users want it?), and viability testing (would they pay for it and integrate it into their workflow?).

What does a product validation scoring framework look like?

A product validation scoring framework gives you a consistent way to evaluate feedback across user sessions. A basic version scores each session on criteria like problem recognition, current workarounds, prototype comprehension, behavioral intent, and objection clarity, each rated 1 to 5. A combined score helps you decide whether to continue building, pivot, or stop.

What is the difference between idea validation and product validation?

Idea validation tests whether a problem is real and worth solving. Product validation goes further: it tests whether your specific solution addresses that problem in a way users would actually adopt. Both are part of a rigorous build process, but idea validation comes first.

How can Miro help with product validation?

Miro's innovation workspace supports the full validation loop, from design sprints and visual ideation to AI-generated prototypes, custom AI Sidekick reviews, async stakeholder feedback via Talktracks, and synthesis of user research. Teams can go from a design sprint output to a shareable, clickable prototype in minutes. Try Miro Prototypes for free.

Author: Sarah Luisa Santos, Content & Growth @Miro, in collaboration with Mathias Davidsen, GM for Prototypes at Miro

Last updated: May 11, 2026