Starbursting brainstorming: Ask the right questions before you build

Summary

Starbursting is a question-first brainstorming technique that helps teams avoid building the wrong thing by systematically exploring problems before jumping to solutions.

Key takeaways:

- What it is: A structured method using a six-point star diagram to generate questions across six categories (Who, What, When, Where, Why, How) instead of immediately seeking answers

- Why it works: Questions expose hidden assumptions, reveal blind spots, and create shared understanding before teams commit time and resources to building

- When to use it: Early product development, complex decision-making, cross-functional alignment, risk identification, and strategic planning—especially for high-stakes or uncertain situations

- How it's different: Traditional brainstorming generates solution ideas; starbursting generates comprehensive questions to understand the problem space first

- Remote team advantage: Works exceptionally well for distributed teams through visual collaboration—everyone contributes simultaneously via sticky notes, no dominant voices, async-friendly

- The process: 90-minute session generating 50+ questions, clustering similar themes, voting on priorities, assigning research owners, and documenting decisions

- Best practice: Combine starbursting (questions first) → research phase (answer critical questions) → traditional brainstorming (generate solutions)

- Common mistakes to avoid: Jumping to answers too soon, stopping at surface-level questions, letting dominant voices take over, treating it as a one-time event, trying to answer everything

Bottom line: The questions you ask before building determine what you build. Starbursting gives teams a systematic framework to ask the right questions early enough to act on the answers.

Starbursting technique explained

Your team just spent three months building a feature nobody uses.

It happens more often than anyone wants to admit. Teams jump straight into solution mode—sketching interfaces, writing code, debating color schemes—without stopping to ask the questions that actually matter. Who needs this? What problem does it solve? Why would someone choose this over what they’re already using?

Starbursting helps fill this gap. Instead of rushing to find solutions, you focus on generating questions. These questions help reveal blind spots, challenge assumptions, and prevent you from launching something nobody wants.

And here’s what makes it work for teams right now: You don’t need everyone in the same room. You don’t need to be the loudest person in the meeting to contribute. You just need a structured way to capture the questions that actually move your project forward.

What is starbursting brainstorming?

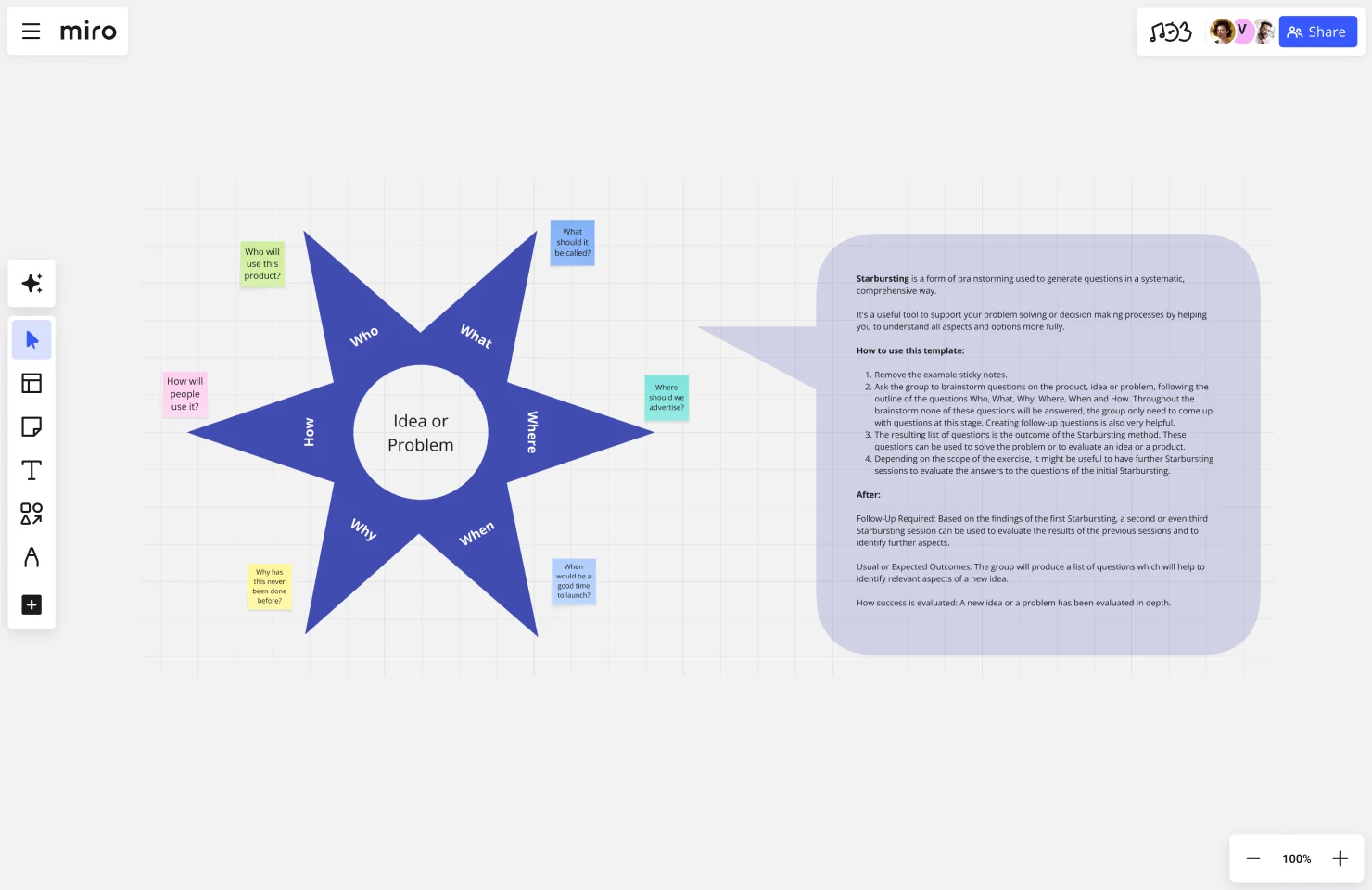

Starbursting is a structured brainstorming technique that generates questions instead of answers.

Here’s how it’s different: Traditional brainstorming asks “What should we build?” Starbursting asks “What do we need to know before we build anything?”

The framework is visual and simple. You draw a six-point star with your idea, product, or problem in the center. Each point of the star represents one question category:

- Who – stakeholders, users, decision-makers, competitors

- What – features, outcomes, alternatives, resources

- When – timing, deadlines, milestones, market windows

- Where – locations, channels, platforms, constraints

- Why – motivations, purpose, value, strategic fit

- How – methods, processes, costs, technical approach

Next, brainstorm questions for each category, not answers. Aim for as many as you can. The more specific your questions, the better.
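For teams that keep their questions in a script or spreadsheet export rather than on a board, the star can be modeled as a topic plus six lists. This is a minimal sketch, not part of any Miro API: the `Starburst` class and its method names are hypothetical, and the check that every entry ends with a question mark is just an assumed, simple way to enforce the "questions, not answers" rule.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The six points of the star.
CATEGORIES = ("Who", "What", "When", "Where", "Why", "How")


@dataclass
class Starburst:
    """A topic at the center of the star, with questions on each point."""

    topic: str
    questions: Dict[str, List[str]] = field(
        default_factory=lambda: {c: [] for c in CATEGORIES}
    )

    def add_question(self, category: str, text: str) -> None:
        if category not in self.questions:
            raise ValueError(f"Unknown category: {category}")
        text = text.strip()
        # Assumed rule of thumb: anything that is not phrased as a
        # question is treated as an answer and rejected.
        if not text.endswith("?"):
            raise ValueError("Starbursting collects questions, not answers")
        self.questions[category].append(text)

    def total(self) -> int:
        return sum(len(qs) for qs in self.questions.values())


board = Starburst("Should we add a video walkthrough to our mobile app onboarding?")
board.add_question("Who", "Who will actually use this every day?")
board.add_question("Why", "Why now instead of next quarter?")
print(board.total())  # 2
```

Calling `board.add_question("What", "Build a video")` raises a `ValueError`, which mirrors the facilitator redirecting an answer back into question form.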

Why asking questions first actually works

When teams jump straight to solutions, they bring assumptions they don’t even realize they’re making. Questions expose those assumptions. They force your team to confront what you actually know versus what you think you know.

Research on product development failures often points to one main cause: teams didn’t fully understand the problem they were trying to solve. Starbursting is a way to structure curiosity. It helps you make sure you’re building the right thing, not just building anything.

The psychology behind it is straightforward: Questions create cognitive space. When someone makes a statement, the natural response is to agree or disagree. When someone asks a question, the natural response is to think and explore.

When teams use starbursting

Early product development – Before you commit to building a new feature or product, starbursting helps validate whether you’re solving a real problem for real people.

Complex decision-making – When you’re evaluating options with multiple factors (technical, business, user impact), questions help you see the full picture before choosing.

Cross-functional alignment – Product, design, engineering, and marketing all have different perspectives. Starbursting surfaces those differences early, when they’re easy to address.

Risk identification – By asking “What could go wrong?” systematically across all six categories, you spot potential problems before they become expensive mistakes.

Strategic planning – Before launching in a new market, expanding your team, or changing your pricing model, questions reveal what you haven’t considered yet.

Starbursting vs. traditional brainstorming

Here’s the breakdown:

| Traditional brainstorming | Starbursting |
| --- | --- |
| Generates many possible solutions | Generates comprehensive questions |
| Divergent thinking (explore possibilities) | Convergent exploration (understand constraints) |
| Fast and energizing | Systematic and thorough |
| Risk: groupthink and obvious ideas | Forces examination of assumptions |
| Best for: Generating creative options when problem is clear | Best for: Understanding complex, uncertain situations before committing |

Neither approach is “better”—they solve different problems.

Use traditional brainstorming when you have a well-defined problem and need volume of ideas fast. Use starbursting when you’re facing complexity, uncertainty, or high stakes.

The hybrid approach

Smart teams often combine both techniques in sequence:

- Starbursting first – Generate questions to understand the problem space

- Research phase – Answer the most critical questions

- Traditional brainstorming – Generate solution ideas now that you understand what you’re solving

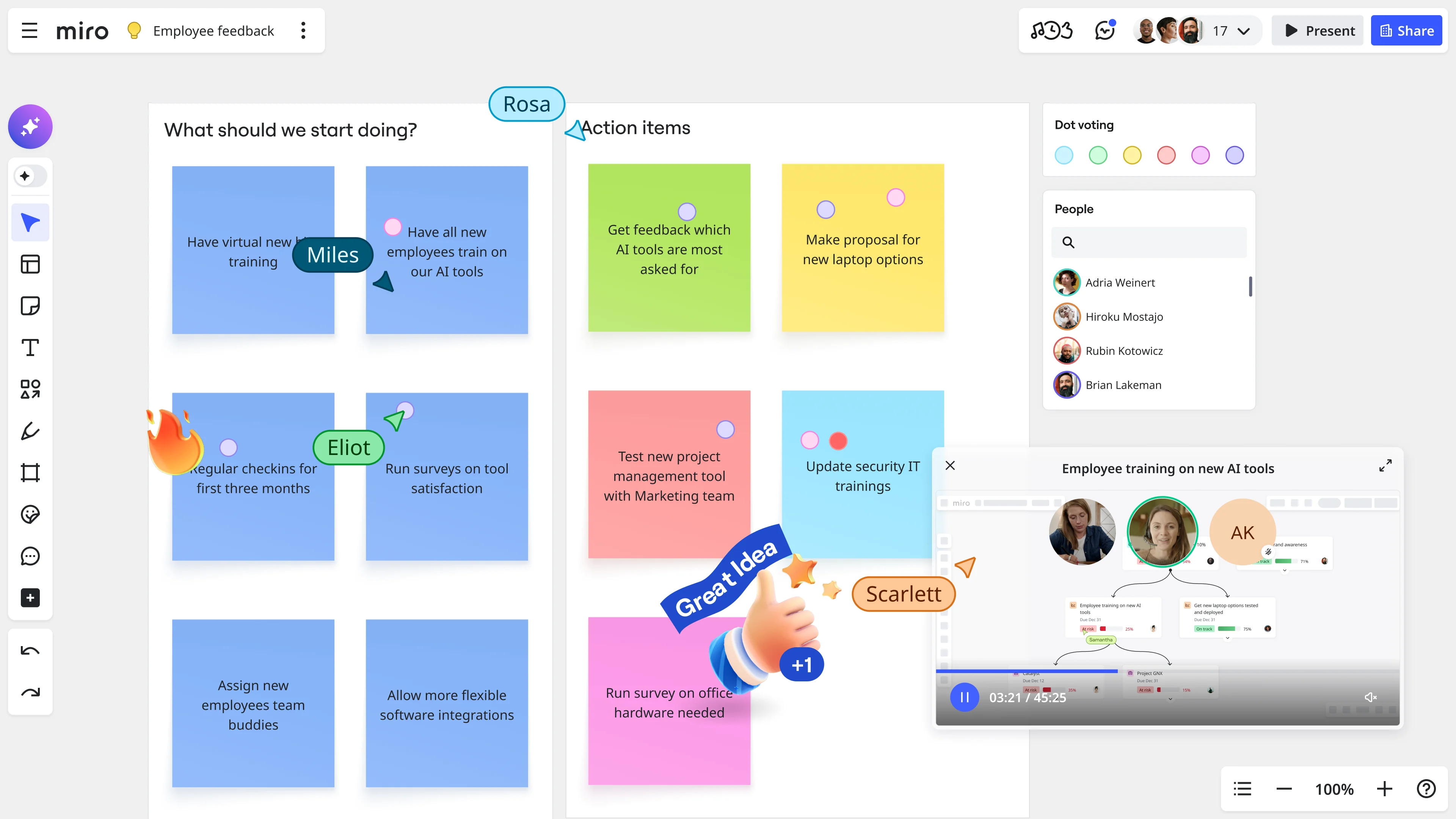

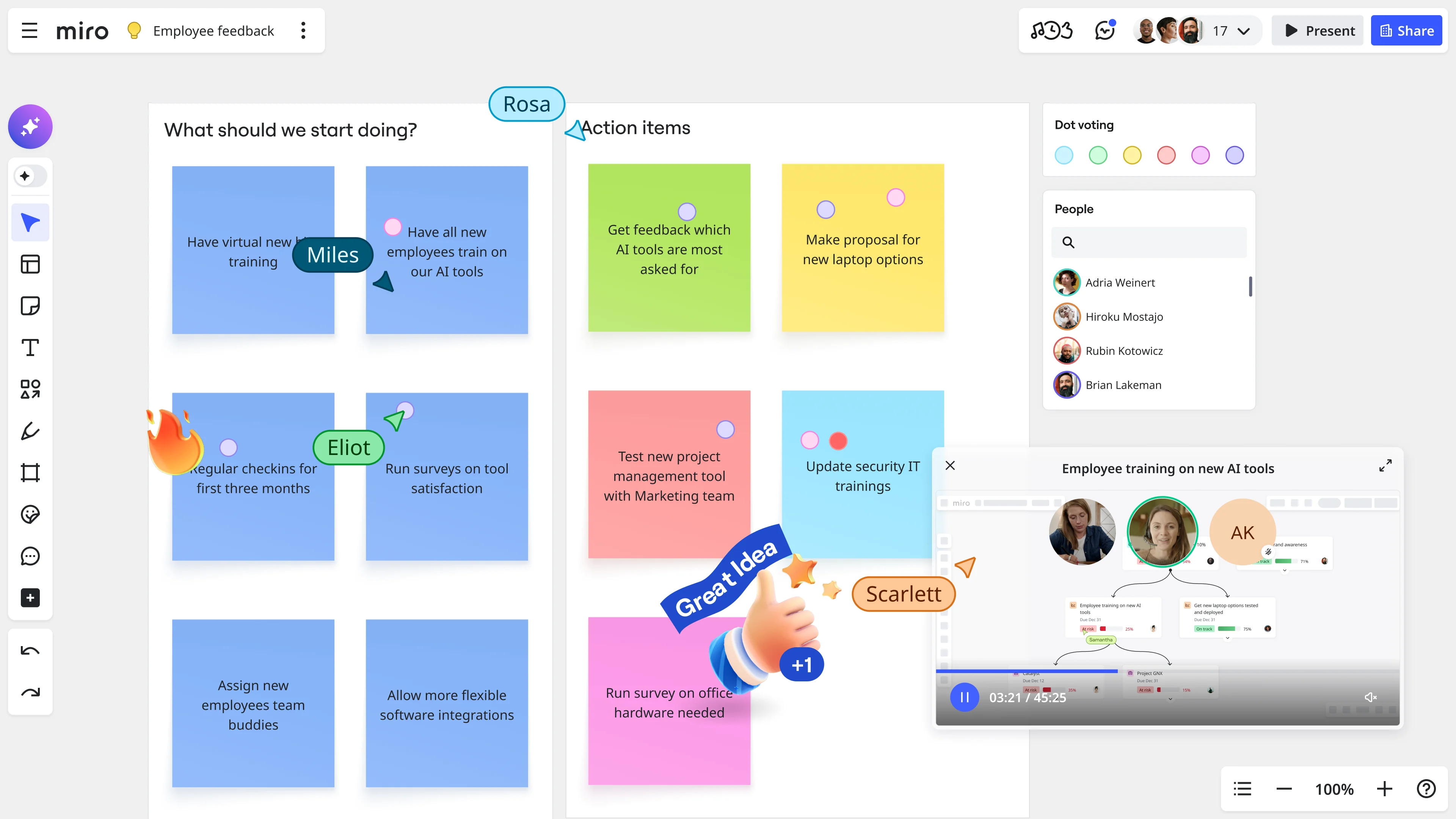

And if you’re using Miro, you can run both techniques on the same infinite canvas—keeping all the context visible as you work.

How to run a starbursting brainstorming session

Here’s exactly how to run an effective starbursting session, whether your team is in the same room or distributed across time zones.

Before the session: Set yourself up for success

1. Get crystal clear on your focus

Write a specific topic or problem statement. Not “improve the onboarding flow” but “Should we add a video walkthrough to our mobile app onboarding?”

The more specific your focus, the better your questions will be.

2. Assemble a cross-functional team (5-8 people ideal)

The power of starbursting comes from diverse perspectives:

- Someone who talks to customers regularly

- Someone who builds the product

- Someone who sells or markets it

- Someone who supports users after they buy

Avoid the trap of all engineers or all designers. Cross-functional means genuinely different viewpoints.

3. Set up your workspace

For remote or hybrid teams, open Miro’s starbursting template. It’s already set up with the six-point star, category labels, and instructions. Your team can start contributing in seconds.

4. Block 90 minutes on everyone’s calendar

- 5 min: Setup and explanation

- 40 min: Generate questions

- 20 min: Cluster and prioritize

- 15 min: Discuss key questions

- 10 min: Capture next steps
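A quick way to sanity-check the agenda is to add up the blocks and confirm they fill the 90 minutes. The sketch below is illustrative only: `AGENDA` and `schedule` are hypothetical names, and the durations are copied from the list above.

```python
# Agenda blocks and their durations in minutes, taken from the list above.
AGENDA = [
    ("Setup and explanation", 5),
    ("Generate questions", 40),
    ("Cluster and prioritize", 20),
    ("Discuss key questions", 15),
    ("Capture next steps", 10),
]
SESSION_MINUTES = 90


def schedule(agenda, start_minute=0):
    """Return (start, end, name) tuples as minute offsets from the session start."""
    slots = []
    cursor = start_minute
    for name, minutes in agenda:
        slots.append((cursor, cursor + minutes, name))
        cursor += minutes
    return slots


slots = schedule(AGENDA)
total = slots[-1][1]
assert total == SESSION_MINUTES  # the blocks fill the 90-minute calendar slot

for start, end, name in slots:
    print(f"{start:>2}-{end:<2} min  {name}")

# 40 minutes across six categories is a little under 7 minutes each.
per_category = 40 / 6
print(round(per_category, 1))  # 6.7
```

If you shorten or lengthen a block, the assertion fails until the total matches the calendar slot again.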

During the session: The 5-step process

Step 1: Set Up Your Star (5 minutes)

If you’re using Miro’s starbursting template, the star is already drawn. Write your topic or problem in its center.

Facilitator’s role: Explain the ground rules:

- We’re generating questions only—no answers yet

- All questions are valid (even obvious ones)

- Quantity matters (aim for 50+ total questions)

- Push past surface-level questions to deeper ones

Step 2: Generate Questions—Not Answers (40 minutes)

Go category by category, spending about 7 minutes on each.

Critical rule: Questions only. Every time someone starts answering, redirect them.

Here’s what good questions look like:

WHO Questions:

- Who will actually use this every day?

- Who has the budget authority to approve this?

- Who might resist this change?

- Who are we competing against?

- Who will maintain this after launch?

WHAT Questions:

- What problem does this solve that nothing else solves?

- What are must-have features versus nice-to-haves?

- What could go wrong?

- What resources do we need that we don’t have?

- What metrics will tell us if this is working?

WHEN Questions:

- When do users need this most urgently?

- When can we realistically ship something?

- When is the competitive window?

- When should we test with users?

WHERE Questions:

- Where will users access this?

- Where are the technical constraints?

- Where does this fit in our roadmap?

- Where are competitors falling short?

WHY Questions:

- Why now instead of next quarter?

- Why us instead of a competitor?

- Why would someone choose this over what they use today?

- Why might this fail?

- Why is this our priority?

HOW Questions:

- How will we build this technically?

- How will we market and sell this?

- How much will it cost?

- How does this scale?

- How do we validate demand before building?

For remote teams using Miro:

Everyone adds questions as sticky notes simultaneously. No waiting for your turn.

Here’s how to make it work:

- Use different colored sticky notes for each category

- Set a timer for each section

- Everyone works at the same time

- @mention teammates when you want their input

And here’s where Miro AI helps: If your team is stuck, Miro AI can analyze the questions you’ve already added and suggest additional ones you might have missed. It identifies gaps in your thinking—question categories where you haven’t dug deep enough yet.

Facilitation tips:

Push past obvious questions: When someone asks “Who is our target user?”, follow up with “Who specifically within that group?”

Use silence productively: After someone adds a question, pause for 10 seconds. That’s when the deeper questions emerge.

Watch for patterns: If all questions are positive, push the team to ask about failure modes.

Timebox each category: When time’s up, move on.

Step 3: Cluster and Prioritize (20 minutes)

Group similar questions together. Drag related sticky notes close to each other. You’ll see themes emerge.

Vote on the most critical questions. In Miro, use the voting feature. Everyone gets 5-7 votes to place on the questions that are most important to answer right now.

Focus on questions that are:

- Decision-blockers (we can’t move forward without answering these)

- High-impact (the answer significantly changes what we build)

- Urgent (we need the answer soon)
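If you export the votes or run the session without a voting tool, the tally is simple to reproduce. This is a rough sketch under stated assumptions: `top_questions`, the ballot format, and the voter names are all hypothetical, and it is not how Miro's voting feature is implemented. Each person gets at most 7 votes, matching the 5-7 range above.

```python
from collections import Counter


def top_questions(ballots, limit=5, votes_per_person=7):
    """Tally votes and return the `limit` most-voted questions with their counts."""
    tally = Counter()
    for voter, picks in ballots.items():
        if len(picks) > votes_per_person:
            raise ValueError(f"{voter} used more than {votes_per_person} votes")
        tally.update(picks)
    return tally.most_common(limit)


# Hypothetical ballots: voter name -> questions they voted for.
ballots = {
    "ana": [
        "Who has the budget authority to approve this?",
        "How do we validate demand before building?",
    ],
    "ben": [
        "How do we validate demand before building?",
        "What could go wrong?",
    ],
    "chi": [
        "How do we validate demand before building?",
        "Who has the budget authority to approve this?",
    ],
}

for question, votes in top_questions(ballots, limit=2):
    print(votes, question)
# 3 How do we validate demand before building?
# 2 Who has the budget authority to approve this?
```

The vote count only ranks the questions. Whether a top question is a decision-blocker, high-impact, or urgent is still a judgment the team makes in discussion.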

Step 4: Tackle High-Priority Questions (15 minutes)

Pick the top 3-5 questions and start discussing answers.

Here’s what you’re looking for:

- What do we already know? Sometimes the answer is in the room.

- What can we decide now? Make the call and document it.

- What needs research? Flag it and assign an owner.

Document your answers right on the Miro board in a different color.

For questions that need research, be specific:

- Who’s responsible?

- What’s the deadline?

- What kind of answer do we need?

Step 5: Define Next Steps (10 minutes)

Before anyone leaves:

Assign owners to the questions that need research. Every question needs a name next to it.

Schedule the follow-up session. Put it on the calendar now.

Link to your project tools. In Miro, you can integrate directly with Jira, Asana, and other tools so your questions flow into execution.

Share the board with stakeholders who couldn’t attend.

After the session: Keep the momentum

Starbursting isn’t a one-and-done exercise. The board you created is a living document.

Add new questions as they emerge. As you do research or start building, new questions will come up.

Update answers as you learn. When you validate an assumption or get user feedback, go back and update the answer.

Reference it in decision discussions. When someone proposes a change, go back to the starbursting board.

Link from your requirements docs. Your PRD should reference your starbursting session.

Starbursting for distributed and hybrid teams

Remote brainstorming is hard. Some people dominate Zoom calls. Introverts don’t speak up. Ideas get lost in Slack threads. Time zones make synchronous meetings nearly impossible.

Starbursting solves most of these problems naturally—especially when you run it on a visual collaboration platform.

Why starbursting works especially well for remote teams

The structure prevents rambling. When you’re going category by category, the conversation stays focused.

Questions feel less intimidating than “pitch your brilliant idea.” Asking “What could go wrong?” is lower stakes than declaring “Here’s my revolutionary concept.”

Written contributions give everyone equal voice. The quietest person can contribute just as much as the loudest.

It’s async-friendly. Unlike rapid-fire brainstorming that requires real-time participation, starbursting can happen over 24 hours.

Parallel contribution is natural. Ten people can add questions simultaneously.

Common mistakes and how to fix them

Mistake #1: Jumping to answers too soon

The problem: Someone asks “Who is our target user?” and immediately people start describing demographics.

How to fix it: The facilitator redirects: “That’s valuable insight—but right now we’re staying in question mode. Can you turn that into another question?”

Mistake #2: Stopping at surface-level questions

The problem: Questions like “Who is our target market?” without digging deeper.

How to fix it: After someone asks a question, prompt: “What else would we need to know about that?” Set a minimum per category (8-10 questions).
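The per-category minimum is easy to check mechanically. The sketch below assumes the questions are available as a plain dictionary of lists. `find_gaps` and `MIN_PER_CATEGORY` are hypothetical names, and the threshold of 8 is the lower end of the 8-10 range above.

```python
MIN_PER_CATEGORY = 8  # lower end of the suggested 8-10 questions


def find_gaps(questions_by_category, minimum=MIN_PER_CATEGORY):
    """Return {category: number of questions still needed} for thin categories."""
    return {
        category: minimum - len(questions)
        for category, questions in questions_by_category.items()
        if len(questions) < minimum
    }


# Placeholder questions, used only to show the counts.
session = {
    "Who": ["?"] * 15,
    "What": ["?"] * 9,
    "When": ["?"] * 8,
    "Where": ["?"] * 8,
    "Why": ["?"] * 2,
    "How": ["?"] * 10,
}

print(find_gaps(session))  # {'Why': 6}
```

A facilitator could run a check like this before moving on, then spend the remaining time on whichever category comes back short.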

Mistake #3: Letting dominant voices take over

The problem: Two or three people contribute most questions.

How to fix it: Start with silent contribution, where everyone adds sticky notes independently for 5 minutes first. Use a round-robin format. In Miro, parallel contribution naturally reduces dominance.

Mistake #4: Treating starbursting as a one-time event

The problem: Great session, then the board sits untouched for months.

How to fix it: Treat your starbursting board as a living document. Return to it during sprint planning, after user testing, when making decisions. Update it as you learn.

Mistake #5: Trying to answer every single question

The problem: The team tries to answer all 60 questions.

How to fix it: Prioritize ruthlessly. Answer the decision-blockers first. Some questions just need acknowledgment, not answers.

Mistake #6: Wrong team composition

The problem: Starbursting with only engineers or only designers.

How to fix it: Cross-functional is non-negotiable. Include customer-facing roles, builders, marketers, and business stakeholders.

Mistake #7: No follow-through

The problem: Amazing session, then silence. Nobody researches the answers.

How to fix it: Assign specific owners with deadlines before the session ends. Schedule the follow-up meeting immediately. Link to project management systems so questions become tracked work items.

Real-world examples

Example 1: SaaS Product Team

A mid-size project management company was debating whether to add AI-powered task prioritization.

What starbursting revealed:

- WHO questions uncovered that power users needed different features than casual users

- WHAT questions showed customers valued accuracy over speed (opposite of their assumption)

- WHEN questions exposed that Monday morning was the critical moment, not real-time

- WHERE questions surfaced mobile technical constraints

- WHY questions challenged whether this was differentiation or table stakes

- HOW questions led to phased rollout instead of big-bang launch

Outcome: Scaled down scope to focus on power users on desktop first. Ran closed beta before committing. Saved an estimated 6 months of building the wrong thing.

Example 2: Marketing Team

An e-commerce company was planning a European expansion.

What starbursting revealed:

- WHO questions identified three distinct customer segments, not one generic “European customer”

- WHAT questions uncovered regulatory complexity around sustainability claims

- WHEN questions showed a competitor launching similar products in Q2

- WHERE questions revealed weak email infrastructure that needed building first

- WHY questions forced honest discussion about competitive positioning

- HOW questions exposed that multi-country launch wasn’t realistic

Outcome: UK-only launch with segmented messaging. Used learnings to refine approach for subsequent countries.

How Miro makes starbursting work for teams

1. Start instantly with templates

Miro’s starbursting template is ready in 60 seconds. No drawing, no setup.

2. Everyone contributes simultaneously

Ten people add sticky notes at once. Parallel contribution is faster and more inclusive.

3. Spatial organization reveals patterns

Cluster related questions visually. See connections that lists hide. Use color-coding, arrows, frames.

4. AI helps you think deeper

Miro AI analyzes your questions and suggests ones you missed. It identifies gaps and spots patterns.

5. Async and sync work together

London team adds questions Monday morning. SF team builds on them Monday afternoon. Tokyo team reviews Tuesday morning. Then sync up for 30 minutes to prioritize.

6. It connects to your workflow

Link questions to research in Google Drive. Create Jira tickets from priorities. Embed technical diagrams. Pull in customer data. Your starbursting board becomes the hub connecting everything.

7. Living documentation

Update answers as you learn. Add questions when they emerge. Search finds “that pricing question from two months ago” instantly. Version history shows how thinking evolved.

Getting started: Your first session

Pick a decision and run your first session this week.

Choose the right first project

Good first projects:

- Deciding whether to build a new feature

- Planning a campaign or initiative

- Evaluating a vendor or technology choice

- Solving a recurring customer problem

Look for:

- Medium complexity (not trivial, not overwhelming)

- Decision needed in 2-4 weeks

- Multiple perspectives add value

- Medium stakes (worth doing well, not career-ending if imperfect)

Prepare your team (15 minutes)

Send a calendar invite with a short pre-read explaining starbursting and the Miro board link.

Set expectations: This is collaborative. Everyone contributes actively.

Run a tight session

Start on time. Explain the rules clearly. Keep momentum with timers. Celebrate good questions. End with clear next steps—who’s doing what by when.

After your first session

Quick retrospective: What worked? What would you change?

Share outcomes broadly. When starbursting prevents a mistake, tell that story.

Make it a habit for major decisions.

Track your wins: “Questions we asked that changed what we built.”

The questions you ask determine what you build

Your team has the talent to build almost anything. That’s not the constraint.

The constraint is knowing what to build. Who needs it? Why would they choose it? How much will it cost? When is the right timing? What could go wrong?

Those questions—the ones you ask before you write code or create mockups—determine whether you ship something valuable or something that sits unused.

Starbursting is a framework for asking those questions systematically. Six categories, eight to ten questions per category, prioritized by what matters most.

It works for in-person teams. It works better for distributed teams when you use visual collaboration to level the playing field.

The best product teams aren’t the ones who move fastest. They’re the ones who ask the right questions early enough to actually act on the answers.

Ready to try starbursting?

Open Miro’s starbursting template, add your topic, invite your team, and spend 90 minutes asking questions about a decision you’re facing.

The questions you uncover might save you from building the wrong thing.

Starbursting technique FAQs

1. How is starbursting different from regular brainstorming?

Traditional brainstorming focuses on generating as many solution ideas as possible—"What should we build?" Starbursting flips this by generating questions first—"What do we need to know before we build anything?" While regular brainstorming uses divergent thinking to explore possibilities, starbursting uses convergent exploration to understand constraints, assumptions, and blind spots. Use traditional brainstorming when your problem is clear and you need creative options. Use starbursting when you're facing complexity, uncertainty, or high stakes and need to understand the problem thoroughly before committing resources.

2. Can starbursting work for remote and distributed teams?

Absolutely—starbursting actually works better for remote teams than traditional brainstorming. The structured format prevents rambling discussions, and written contributions (sticky notes) give everyone equal voice regardless of personality type or time zone. In Miro, team members can contribute simultaneously without waiting for their turn, making parallel contribution faster and more inclusive. You can run starbursting fully synchronous (video call + live collaboration), fully asynchronous (team adds questions over 24-48 hours), or hybrid (async pre-work, sync discussion, async research). The visual format maintains context that gets lost in email threads or documents.

3. How do Miro's AI capabilities enhance starbursting sessions?

Miro AI analyzes the questions your team generates and provides intelligent assistance in several ways. It identifies gaps by highlighting question categories where you haven't explored deeply enough (for example: "You've asked 15 WHO questions but only 2 WHY questions"). It suggests additional questions you might have missed based on your topic and existing questions. AI can automatically cluster similar questions by theme, saving manual organization time. It also recognizes patterns, such as when multiple questions relate to the same underlying concern like technical feasibility or competitive positioning. This helps teams push past obvious questions to uncover deeper insights and blind spots.

4. What Miro integrations support starbursting workflows?

Miro integrates with the tools teams use daily to turn starbursting questions into actionable work. Connect to Jira or Asana to create tracked tasks directly from prioritized questions. Link to Google Drive, Dropbox, or OneDrive to attach research findings, user interview notes, or competitive analysis. Integrate with Slack or Microsoft Teams to share board updates and get team input. Sync with Zoom or Microsoft Teams for seamless video collaboration during sessions. Connect to Azure DevOps for engineering teams managing technical decisions. These integrations ensure your starbursting insights flow directly into execution rather than staying isolated on a whiteboard that gets forgotten.

5. Are there community templates or examples I can use?

Yes! Miro's starbursting template is ready to use at miro.com/templates/starbursting. Beyond the official template, explore Miroverse—Miro's community template gallery—where teams worldwide share their customized starbursting frameworks for specific use cases like product launches, technical architecture decisions, marketing campaigns, and strategic planning. You can browse templates by industry, team type, or methodology. The community also shares real examples showing how different teams adapted starbursting for their specific needs, giving you proven starting points rather than building from scratch.

6. How long does a typical starbursting session take?

A comprehensive starbursting session typically takes 90 minutes: 5 minutes for setup and explanation, 40 minutes generating questions (about 7 minutes per category), 20 minutes clustering and prioritizing, 15 minutes discussing high-priority questions, and 10 minutes capturing next steps. However, you can adapt timing based on your needs. Quick sessions focusing on one or two question categories might take 30-45 minutes. Complex strategic decisions might warrant multiple sessions: an initial 90-minute question generation session, a research phase over 1-2 weeks, then a 60-minute follow-up to review findings and make decisions. The hybrid async-sync approach spreads the work over several days while keeping focused discussion time short.

7. Is my team's starbursting content secure in Miro?

Yes. Miro provides enterprise-grade security for all boards, including starbursting sessions that may contain sensitive product plans, competitive strategy, or confidential business information. Features include encryption for data in transit and at rest, SOC 2 Type II certification, GDPR compliance, and customizable access controls so you can limit who sees specific boards. Enterprise plans offer single sign-on (SSO), advanced admin controls, audit logs to track who accessed or modified boards, and options for private team spaces. You can also set boards to private, team-only, or organization-wide visibility. For highly sensitive strategic planning, Miro supports on-premises deployment options for enterprise customers with strict data residency requirements.

8. What if my team gets stuck or runs out of questions during a session?

Running out of questions usually means you're stopping at surface level. Here's how to push deeper: Use follow-up prompts like "What else would we need to know about that?" or "What's the question underneath that question?" Try the "5 Whys" approach—keep asking why to get to root motivations. Look at questions from different stakeholder perspectives (customer, engineer, support team, executive). Consider failure modes: "What could make this technically unfeasible?" or "Why might users ignore this?" If you're truly stuck, Miro AI can analyze your existing questions and suggest additional angles you haven't explored. Set a minimum threshold (8-10 questions per category) before moving on. Remember: the first 3 questions are usually obvious—questions 4-10 are where you find the insights that matter.

Author: The Miro team

Last updated: January 13, 2026